I/O Standards

Overview

This Apache Beam I/O Standards document lays out prescriptive guidance for first-party and third-party (1P/3P) developers building an Apache Beam I/O connector. These guidelines aim to establish best practices for documentation, development, and testing in a simple and concise manner.

What are built-in I/O Connectors?

An I/O connector (I/O) living in the Apache Beam GitHub repository is known as a built-in I/O connector. Built-in I/Os have their integration tests and performance tests routinely run by the Google Cloud Dataflow Team using the Dataflow Runner, with metrics published publicly for reference. Unless explicitly stated otherwise, the following guidelines apply to both built-in and non-built-in I/Os.

Guidance

Documentation

This section lays out the superset of all documentation that is expected to be made available with an I/O. The Apache Beam documentation referenced throughout this section can be found here. A good example to follow is the built-in Snowflake I/O.

Built-in I/O

Provide code docs for the relevant language of the I/O. These should also link to any external sources of information within the Apache Beam site or an external documentation location. Examples: |

Add a new page under I/O connector guides that covers specific tips and configurations. The following shows those for Parquet, Hadoop and others. Examples:

|

Formatting of the section headers in your Javadoc/Pythondoc should be consistent throughout such that programmatic information extraction for other pages can be enabled in the future. Example subset of sections to include in your page in order:

Example: The KafkaIO JavaDoc |

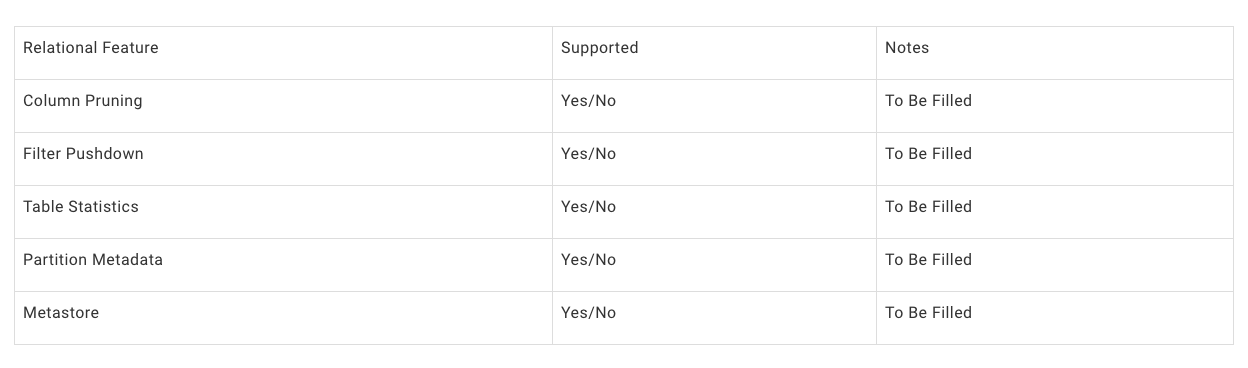

I/O Connectors should include a table under Supported Features subheader that indicates the Relational Features utilized. Relational Features are concepts that can help improve efficiency and can optionally be implemented by an I/O Connector. Using end user supplied pipeline configuration (SchemaIO) and user query (FieldAccessDescriptor) data, relational theory is applied to derive improvements such as faster pipeline execution, lower operation costs and less data read/written. Example table:

Example implementations: BigQueryIO Column Pruning via ProjectionPushdown to return only necessary columns indicated by an end user's query. This is achieved using BigQuery DirectRead API. |

Add a page under Common pipeline patterns, if necessary, outlining common usage patterns involving your I/O. |

Update the I/O Connectors page with your I/O’s information. Example: https://beam.apache.org/documentation/io/connectors/#built-in-io-connectors

|

Provide setup steps to use the I/O, under a Before you start header. Example: https://beam.apache.org/documentation/io/built-in/parquet/#before-you-start |

Include a canonical read/write code snippet after the initial description for each supported language. The below example shows Hadoop with examples for Java. Example: https://beam.apache.org/documentation/io/built-in/hadoop/#reading-using-hadoopformation |

Indicate how timestamps for elements are assigned. This includes batch sources to allow for future I/Os which may provide more useful information than Example: |

Indicate how timestamps are advanced; for Batch sources this will be marked as n/a in most cases. |

Outline any temporary resources (for example, files) that the connector will create. Example: BigQuery batch loads first create a temp GCS location |

Provide, under an Authentication subheader, how to acquire partner authorization material to securely access the source/sink. Example: https://beam.apache.org/documentation/io/built-in/snowflake/#authentication BigQuery names this topic Permissions, but it covers similar material: https://beam.apache.org/releases/javadoc/2.1.0/org/apache/beam/sdk/io/gcp/bigquery/BigQueryIO.html |

I/Os should provide links to the Source/Sink documentation within Before you start header. Example: https://beam.apache.org/documentation/io/built-in/snowflake/ |

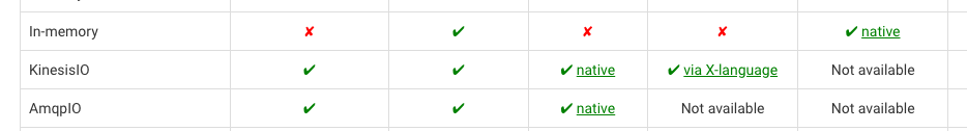

Indicate whether there is native or X-language support in each language, with a link to the docs. Example: Kinesis I/O has a native implementation in Java and X-language support for Python, but no support for Golang. |

Indicate known limitations under a Limitations header. If the limitation has a tracking issue, please link it inline. Example: https://beam.apache.org/documentation/io/built-in/snowflake/#limitations |

I/O (not built-in)

Custom I/Os are not included in the Apache Beam GitHub repository. One example is SolaceIO.

Update the Other I/O Connectors for Apache Beam table with your information. |

Development

This section outlines API syntax, semantics and recommendations for features that should be adopted for new as well as existing Apache Beam I/O Connectors.

The I/O Connector development guidelines are written with the following principles in mind:

- Consistency makes an API easier to learn.

- If there are multiple ways of doing something, we should strive to be consistent first

- With a couple minutes of studying documentation, users should be able to pick up most I/O connectors.

- The design of a new I/O should consider the possibility of evolution.

- Transforms should integrate well with other Beam utilities.

All SDKs

Pipeline Configuration / Execution / Streaming / Windowing semantics guidelines

Topic | Semantics |

|---|---|

Pipeline Options | An I/O should rarely rely on a PipelineOptions subclass to tune internal parameters. If necessary, a connector-related pipeline options class should:

|

Source Windowing | A source must return elements in the GlobalWindow unless explicitly parameterized in the API by the user. Allowable Non-global-window patterns:

|

Sink Windowing | A sink should be Window agnostic and handle elements sent with any Windowing method, unless explicitly parameterized or expressed in its API. A sink may change windowing of a PCollection internally in any way. However, the metadata that it returns as part of its result object must be:

Allowable non-global-window patterns:

|

Throttling | A streaming sink (or any transform accessing an external service) may implement throttling of its requests to avoid overloading the external service. TODO: Beam should expose throttling utilities (Tracking Issue):

|

Error handling | TODO: Tracking Issue |
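As an illustration of the throttling guidance above, the following is a minimal sketch of a client-side request throttle. It is not a Beam utility: the class name `RequestThrottler`, its parameters, and the `total_throttled_seconds` counter are all hypothetical, and the clock and sleep functions are injectable only so the behavior can be unit tested without real waiting.

```python
import time


class RequestThrottler:
    """Hypothetical client-side throttle (not part of Beam).

    Spaces requests so that at most `max_per_second` are sent per second.
    """

    def __init__(self, max_per_second, clock=time.monotonic, sleep=time.sleep):
        if max_per_second <= 0:
            raise ValueError("max_per_second must be positive")
        self._min_interval = 1.0 / max_per_second
        self._clock = clock
        self._sleep = sleep
        # Earliest time at which the next request may be sent.
        self._next_allowed = clock()
        # Total time spent waiting, which a connector could report as a metric.
        self.total_throttled_seconds = 0.0

    def acquire(self):
        """Blocks until the next request may be sent; returns seconds waited."""
        now = self._clock()
        wait = max(0.0, self._next_allowed - now)
        if wait > 0:
            self._sleep(wait)
            self.total_throttled_seconds += wait
        # Schedule the following request one interval after this one.
        self._next_allowed = max(now, self._next_allowed) + self._min_interval
        return wait
```

A sink would call `acquire()` before each request to the external service. Tracking the accumulated wait time separately makes it possible to surface throttling to users or runners, though how that should be reported is left open here.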

Java

General

The primary class used in working with the connector should be named {connector}IO. Example: The BigQuery I/O is org.apache.beam.sdk.io.bigquery.BigQueryIO |

The class should be placed in the package org.apache.beam.sdk.io.{connector}. Example: The BigQueryIO belongs in the Java package org.apache.beam.sdk.io.bigquery |

The integration and performance tests should live under the package org.apache.beam.sdk.io.{connector}.testing. This will cause the various tests to work with the standard user-facing interfaces of the connector. Unit tests should reside in the same package as the connector (i.e. org.apache.beam.sdk.io.{connector}), as they may often test internals of the connector. |

An I/O transform should avoid receiving user lambdas to map elements from a user type to a connector-specific type. Instead, they should interface with a connector-specific data type (with schema information when possible). When necessary, an I/O transform should receive a type parameter that specifies the input type (for sinks) or output type (for sources) of the transform. An I/O transform may not have a type parameter only if it is certain that its output type will not change (e.g. FileIO.MatchAll and other FileIO transforms). |

It is highly discouraged to directly expose third-party libraries in the public API part of the I/O Connector for the following reasons:

Instead, we highly recommend exposing Beam-native interfaces and an adaptor that holds mapping logic. If you believe that the library in question is extremely static in nature, please note it in the I/O itself. |

Sources and sinks should be abstracted with a PTransform wrapper, and internal classes should be declared protected or private. By doing so, implementation details can be added, changed, or removed without breaking dependent code. |

Classes / Methods / Properties

Java Syntax | Semantics |

|---|---|

class IO.Read | Gives access to the class that represents reads within the I/O. A user should not create this class directly. It should be created by a top-level utility method. |

class IO.ReadAll | A few different sources implement runtime configuration for reading from a data source. This is a valuable pattern because it enables a purely batch source to become a more sophisticated streaming source. As much as possible, this type of transform should have the type richness of a construction-time-configured transform:

Example: |

class IO.Write | Gives access to the class that represents writes within the I/O. The Write class should implement a fluent interface pattern. A user should not create this class directly. It should be created by a top-level utility method. |

Other Transform Classes | Some data storage and external systems implement APIs that do not adjust easily to Read or Write semantics (e.g. FhirIO implements several different transforms that fetch or send data to Fhir). These classes should be added only if it is impossible or prohibitively difficult to encapsulate their functionality as part of extra configuration of Read, Write and ReadAll transforms, to avoid increasing the cognitive load on users. A user should not create these classes directly. They should be created by a top-level static method. |

Utility Classes | Some connectors rely on other user-facing classes to set configuration parameters (e.g. JdbcIO.DataSourceConfiguration). These classes should be nested within the {Connector}IO class. This format makes them visible in the main Javadoc and easy for users to discover. |

Method IO<T>.write() | The top-level I/O class will provide a static method to start constructing an I/O.Write transform. This returns a PTransform with a single input PCollection, and a Write.Result output. This method should not specify in its name any of the following:

The above should be specified via configuration parameters if possible. If impossible, then a new static method may be introduced, but this must be exceptional. |

Method IO<T>.read() | The method to start constructing an I/O.Read transform. This returns a PTransform with a single output PCollection. This method should not specify in its name any of the following:

The above should be specified via configuration parameters if possible. If not possible, then a new static method may be introduced, but this must be exceptional, and documented in the I/O header as part of the API. The initial static constructor method may receive parameters if these are few and general, or if they are necessary to configure the transform (e.g. FhirIO.exportResourcesToGcs, JdbcIO.ReadWithPartitions needs a TypeDescriptor for initial configuration). |

IO.Read.from(source) | A Read transform must provide a from method where users can specify where to read from. If a transform can read from different kinds of sources (e.g. tables, queries, topics, partitions), then multiple implementations of this from method can be provided to accommodate this:

The input type for these methods can reflect the external source’s API (e.g. Kafka TopicPartition should use a Beam-implemented TopicPartition object). Sometimes, there may be multiple from locations that use the same input type, which means we cannot leverage method overloading. In that case, introduce a separately named method for each location.

|

IO.Read.fromABC(String abc) | |

IO.Write.to(destination) | A Write transform must provide a to method where users can specify where to write data. If a transform can write to different kinds of destinations while still using the same input element type (e.g. tables, queries, topics, partitions), then multiple implementations of this to method can be provided to accommodate this:

The input type for these methods can reflect the external sink's API (e.g. Kafka TopicPartition should use a Beam-implemented TopicPartition object). If different kinds of destinations require different types of input objects, then these should be handled in separate I/O connectors. Sometimes, there may be multiple to locations that use the same input type, which means we cannot leverage method overloading. In that case, introduce a separately named method for each location.

|

IO.Write.to(DynamicDestination destination) | A write transform may enable writing to more than one destination. This can be a complicated pattern that should be implemented carefully (it is the preferred pattern for connectors that will likely have multiple destinations in a single pipeline). The preferred pattern for this is to define a DynamicDestinations interface (e.g. BigQueryIO.DynamicDestinations) that will allow the user to define all necessary parameters for the configuration of the destination. The DynamicDestinations interface also allows maintainers to add new methods over time (with default implementations to avoid breaking existing users) that will define extra configuration parameters if necessary. |

IO.Write.toABC(destination) | |

class IO.Read.withX IO.Write.withX | withX provides a method for configuration to be passed to the Read method, where X represents the configuration to be created. With the exception of generic with statements (defined below), the I/O should attempt to match the name of the configuration option with that of the option name in the source. These methods should return a new instance of the I/O rather than modifying the existing instance. Example: |

IO.Read.withConfigObject IO.Write.withConfigObject | Some connectors in Java receive a configuration object as part of their configuration. This pattern is encouraged only for particular cases. In most cases, a connector can hold all necessary configuration parameters at the top level. To determine whether a multi-parameter configuration object is an appropriate parameter for a high level transform, the configuration object must:

Example: JdbcIO.DataSourceConfiguration, SpannerConfig, KafkaIO.Read.withConsumerConfigUpdates |

class IO.Write.withFormatFunction | Discouraged, except for dynamic destinations. For sinks that can receive Beam Row-typed PCollections, the format function should not be necessary, because Beam should be able to format the input data based on its schema. For sinks providing Dynamic Destination functionality, elements may carry data that helps determine their destination. These data may need to be removed before writing to their final destination. To include this method, a connector should:

|

IO.Read.withCoder IO.Write.withCoder | Strongly discouraged. Sets the coder to use to encode/decode the element type of the output / input PCollection of this connector. In general, it is recommended that sources will:

If neither #1 nor #2 is possible, then a |

IO.ABC.withEndpoint / with{IO}Client / withClient | Connector transforms should provide a method to override the interface between themselves and the external system that they communicate with. This can enable various uses:

Example: |

Types

Java Syntax | Semantics |

|---|---|

Method IO.Read.expand | The expand method of a Read transform must return a PCollection object with a type. The type may be parameterized or fixed to a class. A user should not create this class directly. It should be created by a top-level utility method. |

Method IO.Read.expand’s PCollection type | The type of the PCollection will usually be one of the following four options. For each of these options, the recommended encoding / data is as follows:

In all cases, asking a user to pass a coder (e.g. |

Method IO.Write.expand | The expand method of any write transform must return an IO.Write.Result object that extends PCollectionTuple. This object allows the transform to return metadata about the results of its writes and allows the write to be followed by other PTransforms. Even if the Write transform does not need to return any metadata, a Write.Result object is still preferable, because it will allow the transform to evolve its metadata over time. Examples of metadata:

Examples: BigQueryIO’s WriteResult |

Evolution

Over time, I/Os need to evolve to address new use cases or to use new APIs under the covers. Some examples of necessary evolution of an I/O:

- A new data type needs to be supported within it (e.g. any-type partitioning in JdbcIO.ReadWithPartitions)

- A new backend API needs to be supported

Java Syntax | Semantics |

|---|---|

Top-level static methods | In general, one should resist adding a completely new static method for functionality that can be captured as configuration within an existing method. An example of too many top-level methods that could be supported via configuration is PubsubIO. A new top-level static method should only be added in the following cases:

|

Python

General

If the I/O lives in Apache Beam, it should be placed in the package apache_beam.io.{connector} or apache_beam.io.{namespace}.{connector}. Example: apache_beam.io.fileio and apache_beam.io.gcp.bigquery |

There will be a module named {connector}.py which is the primary entry point used in working with the connector in a pipeline, importable as apache_beam.io.{connector} or apache_beam.io.{namespace}.{connector}. Example: apache_beam.io.gcp.bigquery / apache_beam/io/gcp/bigquery.py. Another possible layout: apache_beam/io/gcp/bigquery/bigquery.py (automatically import public classes in bigquery/__init__.py) |

The connector must define an |

If the I/O implementation exists in a single module (a single file), then the file {connector}.py can hold it. Otherwise, the connector code should be defined within a directory (connector package) with an __init__.py file that documents the public API. If the connector defines other files containing utilities for its implementation, these files must clearly document the fact that they are not meant to be a public interface. |

Classes / Methods / Properties

Python Syntax | Semantics |

|---|---|

callable ReadFrom{Connector} | This gives access to the PTransform to read from a given data source. It allows you to configure it via the arguments that it receives. For long lists of optional parameters, they may be defined as parameters with a default value. Q. Java uses a builder pattern. Why can’t we do that in Python? Optional parameters can serve the same role in Python. Example: |

callable ReadAllFrom{Connector} | A few different sources implement runtime configuration for reading from a data source. This is a valuable pattern because it enables a purely batch source to become a more sophisticated streaming source. As much as possible, this type of transform should have the type richness and safety of a construction-time-configured transform:

Example: |

callable WriteTo{Connector} | This gives access to the PTransform to write into a given data sink. It allows you to configure it via the arguments that it receives. For long lists of optional parameters, they may be defined as parameters with a default value. Q. Java uses a builder pattern. Why can’t we do that in Python? Optional parameters can serve the same role in Python. Example: |

callables Read/Write | A top-level transform initializer (ReadFromIO/ReadAllFromIO/WriteToIO) must aim to require the fewest possible parameters, to simplify its usage, and allow users to use them quickly. |

parameter ReadFrom{Connector}({source}) parameter WriteTo{Connector}({sink}) | The first parameter in a Read or Write I/O connector must specify the source for readers or the destination for writers. If a transform can read from different kinds of sources (e.g. tables, queries, topics, partitions), then the suggested approaches by order of preference are:

|

parameter WriteToIO(destination={multiple_destinations}) | A write transform may enable writing to more than one destination. This can be a complicated pattern that should be implemented carefully (it is the preferred pattern for connectors that will likely have multiple destinations in a single pipeline). The preferred API pattern in Python is to pass callables (e.g. WriteToBigQuery) for all parameters that will need to be configured. In general, examples of callable parameters may be:

Using these callables also allows maintainers to add new parameterizable callables over time (with default values to avoid breaking existing users) that will define extra configuration parameters if necessary. Corner case: It is often necessary to pass side inputs to some of these callables. The recommended pattern is to have an extra parameter in the constructor to include these side inputs (e.g. WriteToBigQuery’s table_side_inputs parameter) |

parameter ReadFromIO(param={param_val}) parameter WriteToIO(param={param_val}) | Any additional configuration can be added as optional parameters in the constructor of the I/O. Whenever possible, mandatory extra parameters should be avoided. Optional parameters should have reasonable default values, so that picking up a new connector will be as easy as possible. |

parameter ReadFromIO(config={config_object}) | Discouraged Some connectors in Python may receive a complex configuration object as part of their configuration. This pattern is discouraged, because a connector can hold all necessary configuration parameters at the top level. To determine whether a multi-parameter configuration object is an appropriate parameter for a high level transform, the configuration object must:

|
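The parameter conventions in the table above can be sketched as follows. This is an illustrative outline only: `ReadFromExample` and `WriteToExample` are hypothetical names, and they are plain Python classes standing in for PTransform subclasses so that the sketch runs without Beam installed. The option names (`batch_size`, `timeout_seconds`, `create_if_missing`, `max_retries`) are assumptions chosen for the example.

```python
class ReadFromExample:
    """Hypothetical read transform: the source comes first, the rest is optional."""

    def __init__(self, source, *, batch_size=1000, timeout_seconds=30.0):
        # The only mandatory parameter is the source itself.
        if not source:
            raise ValueError("source must be a non-empty table name or query")
        self.source = source
        # Optional parameters carry reasonable defaults so that a new user
        # can start with ReadFromExample("my_table") and nothing else.
        self.batch_size = batch_size
        self.timeout_seconds = timeout_seconds


class WriteToExample:
    """Hypothetical write transform that accepts a fixed or per-element destination."""

    def __init__(self, destination, *, create_if_missing=True, max_retries=3):
        # A callable destination enables writing to multiple destinations;
        # a plain value is wrapped so both cases share one code path.
        if callable(destination):
            self._destination_fn = destination
        else:
            self._destination_fn = lambda _element: destination
        self.create_if_missing = create_if_missing
        self.max_retries = max_retries

    def destination_for(self, element):
        """Returns the destination that `element` should be written to."""
        return self._destination_fn(element)
```

For example, `WriteToExample(lambda e: "events_" + e["type"])` routes each element by its `type` field, while `WriteToExample("events")` sends everything to one place. New optional keyword parameters can later be added with defaults without breaking existing callers.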

Types

Python Syntax | Semantics |

|---|---|

Output of method ReadFromIO.expand | The expand method of a Read transform must return a PCollection object with a type, and be annotated with the type. Preferred PCollection types in Python are (in order of preference): Simple Python types if possible (bytes, str, numbers). For complex types:

|

Output of method WriteToIO.expand | The expand method of any write transform must return a Python object with a fixed class type. The recommended name for the class is WriteTo{IO}Result. This object allows transforms to return metadata about the results of its writing. If the Write transform would not need to return any metadata, a Python object with a class type is still preferable, because it will allow the transform to evolve its metadata over time. Examples of metadata:

Example: BigQueryIO’s WriteResult Motivating example (a bad pattern): WriteToBigQuery’s inconsistent dictionary results[1][2] |

Input of method WriteToIO.expand | The expand method of a Write transform must accept a PCollection object with a type, and be annotated with the type. Preferred PCollection types in Python are the same as the output types described above for ReadFromIO.expand. |
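To illustrate the recommendation for a fixed result class, here is a minimal sketch of what a `WriteTo{IO}Result` type might look like. The class name `WriteToExampleResult` and its fields are hypothetical. In a real connector the attributes would likely hold PCollections; plain values are used here so the sketch runs without Beam.

```python
from dataclasses import dataclass, field
from typing import Any, List


@dataclass(frozen=True)
class WriteToExampleResult:
    """Hypothetical fixed result type returned by a write transform."""

    # Number of elements written successfully.
    written_count: int = 0
    # Elements that could not be written, for downstream handling.
    failed_rows: List[Any] = field(default_factory=list)
    # Further metadata fields can be added later with default values,
    # so existing callers keep working as the transform evolves.


def describe(result: WriteToExampleResult) -> str:
    """Shows attribute access on the typed result (no dictionary keys to guess)."""
    return f"written={result.written_count} failed={len(result.failed_rows)}"
```

Compared with returning a dictionary, a named class gives callers a stable, discoverable set of attributes, and a misspelled attribute fails immediately instead of silently returning nothing.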

GoLang

General

If the I/O lives in Apache Beam, it should be placed in the package {connector}io. Example: avroio and bigqueryio |

Integration and performance tests should live under the same package as the I/O itself: {connector}io |

Typescript

Classes / Methods / Properties

Typescript Syntax | Semantics |

|---|---|

function readFromXXX | The method to start constructing an I/O.Read transform. |

function writeToXXX | The method to start constructing an I/O.Write transform. |

Testing

An I/O should have unit tests, integration tests, and performance tests. In the following guidance we explain what each type of test aims to achieve, and provide a baseline standard of test coverage. Do note that the actual test cases and business logic of the actual test would vary depending on specifics of each source/sink but we have included some suggested test cases as a baseline.

This guide complements the Apache Beam I/O transform testing guide by adding specific test cases and scenarios. For general information regarding testing Beam I/O connectors, please refer to that guide.

Integration and performance tests should live under the package org.apache.beam.sdk.io.{connector}.testing. This will cause the various tests to work with the standard user-facing interfaces of the connector. Unit tests should reside in the same package (i.e. org.apache.beam.sdk.io.{connector}), as they may often test internals of the connector. |

Unit Tests

I/O unit tests need to efficiently test the functionality of the code. Given that unit tests are expected to be executed many times over multiple test suites (for example, for each Python version) these tests should execute relatively fast and should not have side effects. We recommend trying to achieve 100% code coverage through unit tests.

When possible, unit tests are favored over integration tests due to faster execution time and low resource usage. Additionally, unit tests can be easily included in pre-commit test suites (for example, Jenkins beam_PreCommit_* test suites) and hence have a better chance of discovering regressions early. Unit tests are also preferred for error conditions.

The unit testing class should be part of the same package as the IO and named {connector}IOTest. Example: sdks/java/io/cassandra/src/test/java/org/apache/beam/sdk/io/cassandra/CassandraIOTest.java |

Suggested Test Cases

Functionality to test | Description | Example(s) |

|---|---|---|

Reading with default options | Preferably runs a pipeline locally using DirectRunner and a fake of the data store. But can be a unit test of the source transform using mocks. | |

Writing with default options | Preferably runs a pipeline locally using DirectRunner and a fake of the data store. But can be a unit test of the sink transform using mocks. | |

Reading with additional options | For every option available to users. | |

Writing with additional options | For every option available to users. For example, writing to dynamic destinations. | |

Reading additional element types | If the data store read schema supports different data types. | |

Writing additional element types | If the data store write schema supports different data types. | |

Display data | Tests that the source/sink populates display data correctly. | AvroIOTest.testReadDisplayData DatastoreV1Test.testReadDisplayData bigquery_test.TestBigQuerySource.test_table_reference_display_data |

Initial splitting | There can be many variations of these tests. Please refer to examples for details. | |

Dynamic work rebalancing | There can be many variations of these tests. Please refer to examples for details. | BigTableIOTest.testReadingSplitAtFractionExhaustive avroio_test.AvroBase.test_dynamic_work_rebalancing_exhaustive |

Schema support | Reading a PCollection<Row> or writing a PCollection<Row> Should verify retrieving schema from a source, and pushing/verifying the schema for a sink. | |

Validation test | Tests that source/sink transform is validated correctly, i.e. incorrect/incompatible configurations are rejected with actionable errors. | |

Metrics | Confirm that various read/write metrics get set | |

Read All | Tests that the read all (PCollection<Read Config>) version of the transform works. | |

Sink batching test | Make sure that sinks batch data before writing, if the sink performs batching for performance reasons. | |

Error handling | Make sure that various errors (for example, HTTP error codes) from a data store are handled correctly | |

Retry policy | Confirms that the source/sink retries requests as expected | |

Output PCollection from a sink | Sinks should produce a PCollection that subsequent steps could depend on. | |

Backlog byte reporting | Tests to confirm that the unbounded source transforms report backlog bytes correctly. | KinesisReaderTest.getSplitBacklogBytesShouldReturnBacklogUnknown |

Watermark reporting | Tests to confirm that the unbounded source transforms report the watermark correctly. | WatermarkPolicyTest.shouldAdvanceWatermarkWithTheArrivalTimeFromKinesisRecords |
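A few of the suggested cases above (reading with default options, reading with an additional option, and a validation test) can be sketched with a fake data store. Everything here is hypothetical: `FakeDataStore`, `read_rows`, and the `limit` option stand in for a real connector's read path, and a real test would typically run a pipeline on the DirectRunner instead of calling a function directly.

```python
import unittest


class FakeDataStore:
    """In-memory stand-in for the external service (hypothetical)."""

    def __init__(self, tables=None):
        self.tables = dict(tables or {})

    def read(self, table):
        if table not in self.tables:
            raise KeyError(f"table not found: {table}")
        return list(self.tables[table])


def read_rows(store, table, limit=None):
    """Hypothetical connector read path under test."""
    # Reject bad configuration early, with an actionable message.
    if not table:
        raise ValueError("table must be a non-empty string")
    if limit is not None and limit < 0:
        raise ValueError("limit must be non-negative")
    rows = store.read(table)
    return rows if limit is None else rows[:limit]


class ExampleIOTest(unittest.TestCase):
    def setUp(self):
        # A fresh fake per test keeps tests fast and free of side effects.
        self.store = FakeDataStore({"t": [1, 2, 3]})

    def test_read_with_default_options(self):
        self.assertEqual(read_rows(self.store, "t"), [1, 2, 3])

    def test_read_with_limit_option(self):
        self.assertEqual(read_rows(self.store, "t", limit=2), [1, 2])

    def test_validation_rejects_empty_table(self):
        with self.assertRaisesRegex(ValueError, "non-empty"):
            read_rows(self.store, "")
```

Saved in a test module, these cases can be run with `python -m unittest`. Because the fake lives in memory, the tests have no side effects and are cheap enough to run in every pre-commit suite.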

Integration Tests

Integration tests test end-to-end interactions between the Beam runner and the data store a given I/O connects to. Since these usually involve remote RPC calls, integration tests take a longer time to execute. Additionally, Beam runners may use more than one worker when executing integration tests. Due to these costs, an integration test should only be implemented when a given scenario cannot be covered by a unit test.

Implementing at least one integration test that involves interactions between Beam and the external storage system is required for submission. |

For I/O connectors that involve both a source and a sink, the Beam guide recommends implementing tests in the write-then-read form so that both read and write can be covered by the same test pipeline. |

The integration testing class should be part of the same package as the I/O and named {connector}IOIT. For example: sdks/java/io/cassandra/src/test/java/org/apache/beam/sdk/io/cassandra/CassandraIOIT.java |

Suggested Test Cases

Test type | Description | Example(s) |

|---|---|---|

“Write then read” test using Dataflow | Writes generated data to the datastore and reads the same data back from the datastore using Dataflow. | |

“Write then read all” test using Dataflow | Same as “write then read” but for sources that support reading a PCollection of source configs. All future (SDF) sources are expected to support this. If the same transform is used for the “read” and “read all” forms, or if the two transforms are essentially the same (for example, the read transform is a simple wrapper of the read all or vice versa), just adding a single “read all” test should be sufficient. | |

Unbounded write then read using Dataflow | A pipeline that continuously writes and reads data. Such a pipeline should be canceled to verify the results. This is only for connectors that support unbounded read. | |
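The shape of a "write then read" test can be sketched as below. This is only an outline of the control flow: a real integration test would target the actual external service and run on a runner such as Dataflow, whereas `InMemoryStore` here is a hypothetical stand-in so the sketch is runnable. Comparing an order-insensitive hash plus a count is one possible way to verify results, chosen here as an assumption.

```python
import hashlib


class InMemoryStore:
    """Hypothetical stand-in for the real external data store."""

    def __init__(self):
        self._tables = {}

    def write(self, table, records):
        self._tables.setdefault(table, []).extend(records)

    def read(self, table):
        return list(self._tables.get(table, []))


def generate_records(count):
    """Deterministic test data, so expected results can be recomputed."""
    return [f"record-{i}" for i in range(count)]


def content_hash(records):
    """Order-insensitive hash, since reads may return records in any order."""
    digest = hashlib.sha256()
    for record in sorted(records):
        digest.update(record.encode("utf-8"))
        digest.update(b"\n")  # separator avoids ambiguous concatenation
    return digest.hexdigest()


def write_then_read(store, table, num_records):
    """Writes generated data, reads it back, and checks count and content."""
    expected = generate_records(num_records)
    store.write(table, expected)
    actual = store.read(table)
    return (
        len(actual) == len(expected)
        and content_hash(actual) == content_hash(expected)
    )
```

Covering both directions in one pipeline keeps the number of costly integration tests low, and the same test can serve as a performance test by raising `num_records`.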

Performance Tests

Because the Performance testing framework is still in flux, performance tests can be a follow-up submission after the actual I/O code.

The Performance testing framework does not yet support GoLang or Typescript.

Performance benchmarks are a critical part of best practices for I/Os as they effectively address several areas:

- To evaluate whether the cost and performance of a specific I/O or Dataflow template meets the customer’s business requirements.

- To illustrate performance regressions and improvements to I/O or Dataflow templates between code changes.

- To help end customers estimate costs and plan capacity to meet their SLOs.

Dashboard

Google runs performance tests routinely for built-in I/Os and publishes them to an externally viewable dashboard for Java and Python.

Guidance

Use the same tests for integration and performance tests when possible. Performance tests are usually the same as an integration test but involve a larger volume of data. Testing frameworks (internal and external) provide features to track performance benchmarks related to these tests and provide dashboards/tooling to detect anomalies. |

Include a Resource Scalability section in your page under the Built-in I/O connector guides documentation, indicating the upper bounds that the I/O has integration tests for. For example: an indication that KafkaIO has integration tests with xxxx topics. The documentation can state whether the connector authors believe that the connector can scale beyond the integration-tested number; however, stating the tested bounds makes the limits of the tested paths clear to the user. The documentation should clearly indicate the configuration that was used to reach the limits. For example: using runner x and configuration option a. |

Document the performance / internal metrics that your I/O collects, including what they mean and how they can be used (some connectors collect and publish performance metrics like latency/bundle size/etc). |

Include expected performance characteristics of the I/O based on performance tests that the connector has in place. |

Last updated on 2026/05/22
